© 2020 Neo4j, Inc.,

License: Creative Commons 4.0

This is the SDN/RX manual version 1.1.1.

It contains excerpts of the shared Spring Data Commons documentation, adapted to contain only supported features and annotations.

Who should read this?

This manual is written for:

-

the enterprise architect investigating Spring integration for Neo4j.

-

the engineer developing Spring Data based applications with Neo4j.

1. Introduction

1.1. Your way through this document

If you are already familiar with the core concepts of Spring Data, head straight to Chapter 4. This chapter will walk you through different options of configuring an application to connect to a Neo4j instance and how to model your domain.

In most cases, you will need a domain. Go to Chapter 5 to learn about how to map nodes and relationships to your domain model.

After that, you will need some means to query the domain. Choices are Neo4j repositories, the Neo4j Template or on a lower level, the Neo4j Client. All of them are available in a reactive fashion as well. Apart from the paging mechanism, all the features of standard repositories are available in the reactive variant.

You will find the building blocks in the next chapter.

To learn more about the general concepts of repositories, head over to Chapter 6.

You can of course read on, continuing with the preface and a gentle getting started guide.

2. Preface

2.1. NoSQL and Graph databases

A graph database is a storage engine that is specialized in storing and retrieving vast networks of information. It efficiently stores data as nodes with relationships to other or even the same nodes, thus allowing high-performance retrieval and querying of those structures. Properties can be added to both nodes and relationships. Nodes can be labelled by zero or more labels, relationships are always directed and named.

Graph databases are well suited for storing most kinds of domain models. In almost all domains, there are certain things connected to other things. In most other modeling approaches, the relationships between things are reduced to a single link without identity and attributes. Graph databases allow you to keep the rich relationships that originate from the domain equally well-represented in the database without resorting to also modeling the relationships as "things". There is very little "impedance mismatch" when putting real-life domains into a graph database.

2.1.1. Introducing Neo4j

Neo4j is an open source NoSQL graph database. It is a fully transactional database (ACID) that stores data structured as graphs consisting of nodes, connected by relationships. Inspired by the structure of the real world, it allows for high query performance on complex data, while remaining intuitive and simple for the developer.

The starting point for learning about Neo4j is neo4j.com. Here is a list of useful resources:

-

The Neo4j documentation introduces Neo4j and contains links to getting started guides, reference documentation and tutorials.

-

The online sandbox provides a convenient way to interact with a Neo4j instance in combination with the online tutorial.

-

Neo4j Java Bolt Driver

2.2. Spring and Spring Data

Spring Data uses Spring Framework’s core functionality, such as the IoC container, type conversion system, expression language, JMX integration, and portable DAO exception hierarchy. While it is not necessary to know all the Spring APIs, understanding the concepts behind them is. At a minimum, the idea behind IoC should be familiar.

The Spring Data Neo4j project applies Spring Data concepts to the development of solutions using the Neo4j graph data store. We provide repositories as a high-level abstraction for storing and querying data, as well as templates and clients for generic domain access or generic query execution. All of them are integrated with Spring’s application transactions.

The core functionality of the Neo4j support can be used directly, through either the Neo4jClient or the Neo4jTemplate or the reactive variants thereof.

All of them provide integration with Spring’s application level transactions.

On a lower level, you can grab the Bolt driver instance, but then you have to manage your own transactions.

To learn more about Spring, you can refer to the comprehensive documentation that explains in detail the Spring Framework. There are a lot of articles, blog entries and books on the matter - take a look at the Spring Framework home page for more information.

2.3. What is Spring Data Neo4j⚡️RX

Spring Data Neo4j⚡RX is the successor to Spring Data Neo4j + Neo4j-OGM. The separate layer of Neo4j-OGM (Neo4j Object Graph Mapper) has been replaced by Spring infrastructure, but the basic concepts of an Object Graph Mapper (OGM) still apply:

An OGM maps nodes and relationships in the graph to objects and references in a domain model. Object instances are mapped to nodes while object references are mapped using relationships, or serialized to properties (e.g. references to a Date). JVM primitives are mapped to node or relationship properties. An OGM abstracts the database and provides a convenient way to persist your domain model in the graph and query it without having to use low level drivers directly. It also provides the flexibility to the developer to supply custom queries where the queries generated by SDN/RX are insufficient.

2.3.1. What’s in the box?

Spring Data Neo4j⚡RX or in short SDN/RX is a next-generation Spring Data module, created and maintained by Neo4j, Inc. in close collaboration with Pivotal’s Spring Data Team.

SDN/RX relies completely on the Neo4j Java Driver, without introducing another "driver" or "transport" layer between the mapping framework and the driver. The Neo4j Java Driver - sometimes dubbed Bolt or the Bolt driver - is used as a protocol much like JDBC is with relational databases.

Noteworthy features that differentiate SDN/RX from Spring Data Neo4j + OGM are

-

Full support for immutable entities and thus full support for Kotlin’s data classes

-

Full support for the reactive programming model in the Spring Framework itself and Spring Data

-

Brand new Neo4j client and reactive client feature, resurrecting the idea of a template over the plain driver, easing database access

SDN/RX is currently developed in parallel with Spring Data Neo4j and will eventually replace it once they are at feature parity with regard to repository support and mapping.

2.3.2. Why should I use SDN/RX in favor of SDN+OGM

SDN/RX has several features not present in SDN+OGM, notably

-

Full support for Spring’s reactive story, including reactive transactions

-

Full support for Query By Example

-

Full support for fully immutable entities

-

Support for all modifiers and variations of derived finder methods, including spatial queries

2.3.3. Do I need both SDN/RX and Spring Data Neo4j?

No.

They are mutually exclusive and you cannot mix them in one project.

2.3.4. How does SDN/RX relate to Neo4j-OGM?

Neo4j-OGM is an Object Graph Mapping library, which is mainly used by Spring Data Neo4j as its backend for the heavy lifting of mapping nodes and relationships into domain objects. SDN/RX does not need and does not support Neo4j-OGM. SDN/RX uses Spring Data’s mapping context exclusively for scanning classes and building the meta model.

While this pins SDN/RX to the Spring ecosystem, it has several advantages, among them the smaller footprint with regard to CPU and memory usage and, especially, all the features of Spring’s mapping context.

2.3.6. Does SDN/RX support embedded Neo4j?

Embedded Neo4j has multiple facets to it:

Does SDN/RX interact directly with an embedded instance?

No.

An embedded database is usually represented by an instance of org.neo4j.graphdb.GraphDatabaseService and has no Bolt connector out of the box.

SDN/RX can, however, work very well with Neo4j’s test harness, which is specifically meant to be a drop-in replacement for the real database.

Support for both Neo4j 3.5 and 4.0 test harness is implemented via the Spring Boot starter for the driver.

Have a look at the corresponding module org.neo4j.driver:neo4j-java-driver-test-harness-spring-boot-autoconfigure.

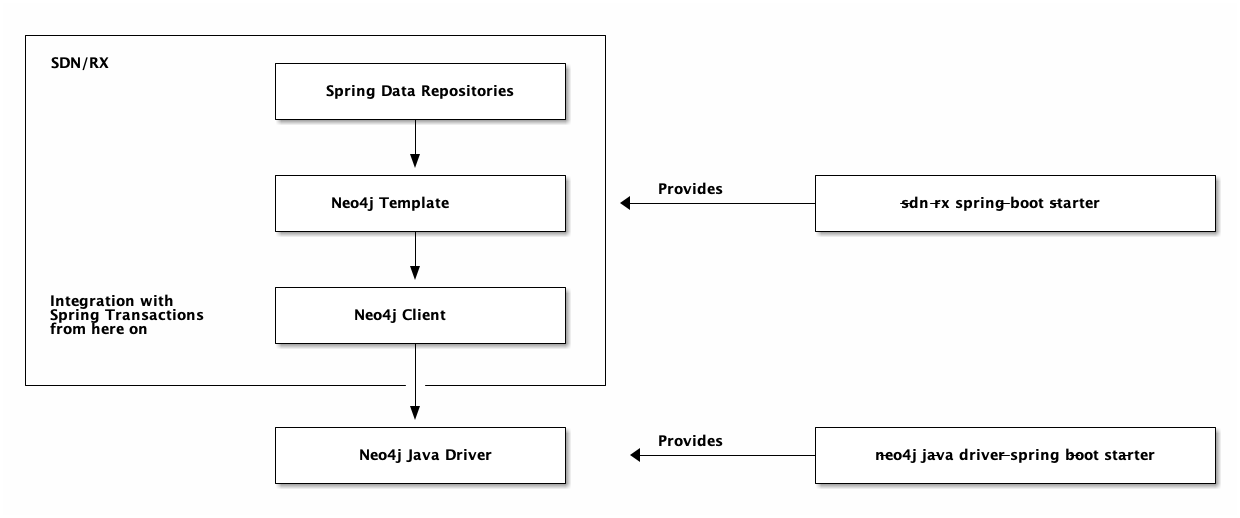

3. Building blocks

SDN/RX consists of composable building blocks.

It builds on top of the Neo4j Java Driver.

The instance of the Java driver is provided by our starter.

All configuration options of the driver can be configured through the starter in the namespace org.neo4j.driver.

The driver bean provides imperative, asynchronous and reactive methods to interact with Neo4j.

You can use all transaction methods the driver provides on that bean such as auto-commit transactions, transaction functions and unmanaged transactions. Be aware that those transactions are not tied to an ongoing Spring transaction.

Integration with Spring Data and Spring’s platform or reactive transaction manager starts at the Neo4j Client.

The client is part of SDN/RX and SDN/RX is configured through a separate starter, spring-data-neo4j-rx-spring-boot-starter.

The configuration namespace of that starter is org.neo4j.data.

The client is mapping agnostic. It doesn’t know about your domain classes and you are responsible for mapping a result to an object suiting your needs.

The next higher level of abstraction is the Neo4j Template. It is aware of your domain and you can use it to query arbitrary domain objects. The template comes in handy in scenarios with a large number of domain classes or custom queries for which you don’t want to create an additional repository abstraction each.

The highest level of abstraction is a Spring Data repository.

All abstractions of SDN/RX come in both imperative and reactive fashions. It is not recommended to mix both programming styles in the same application. The reactive infrastructure requires a Neo4j 4.0+ database.

4. Getting started

We provide a Spring Boot starter for SDN/RX.

Please include the starter module via your dependency management and configure the bolt URL to use, for example org.neo4j.driver.uri=bolt://localhost:7687.

The starter assumes that the server has disabled authentication.

As the SDN/RX starter depends on the starter for the Java Driver, everything said there about configuration applies here as well.

For a reference of the available properties, use your IDE’s autocompletion in the org.neo4j.driver namespace or look at the dedicated manual.

SDN/RX supports

-

The well known and understood imperative programming model (much like Spring Data JDBC or JPA)

-

Reactive programming based on Reactive Streams, including full support for reactive transactions.

Those are all included in the same binary. The reactive programming model requires a Neo4j 4.0 server on the database side and reactive Spring on the application side. Have a look at the examples directory for all examples.

4.1. Prepare the database

For this example, we stay within the movie graph, as it comes for free with every Neo4j instance.

If you don’t have a running database but Docker installed, please run:

docker run --publish=7474:7474 --publish=7687:7687 -e 'NEO4J_AUTH=neo4j/secret' neo4j:4.0.4

You can now access http://localhost:7474.

The above command sets the password of the server to secret.

Note the command ready to run in the prompt (:play movies).

Execute it to fill your database with some test data.

4.2. Create a new Spring Boot project

The easiest way to set up a Spring Boot project is start.spring.io (which is integrated in the major IDEs as well, in case you don’t want to use the website).

Select the "Spring Web Starter" to get all the dependencies needed for creating a Spring based web application. The Spring Initializr will take care of creating a valid project structure for you, with all the files and settings in place for the selected build tool.

| Don’t choose Spring Data Neo4j here, as it will get you the previous generation of Spring Data Neo4j including OGM and additional abstraction over the driver. |

You might want to follow this link for a preconfigured setup.

4.2.1. Using Maven

You can issue a curl request against the Spring Initializr to create a basic Maven project:

curl https://start.spring.io/starter.tgz \

-d dependencies=webflux,actuator \

-d bootVersion=2.3.0.RELEASE \

-d baseDir=Neo4jSpringBootExample \

-d name=Neo4j%20SpringBoot%20Example | tar -xzvf -

This will create a new folder Neo4jSpringBootExample.

As this starter is not yet on the Initializr, you will have to add the following dependency manually to your pom.xml:

<dependency>

<groupId>org.neo4j.springframework.data</groupId>

<artifactId>spring-data-neo4j-rx-spring-boot-starter</artifactId>

<version>1.1.1</version>

</dependency>

You would also add the dependency manually in case of an existing project.

4.2.2. Using Gradle

The idea is the same, just generate a Gradle project:

curl https://start.spring.io/starter.tgz \

-d dependencies=webflux,actuator \

-d type=gradle-project \

-d bootVersion=2.3.0.RELEASE \

-d baseDir=Neo4jSpringBootExampleGradle \

-d name=Neo4j%20SpringBoot%20Example | tar -xzvf -

The dependency for Gradle looks like this and must be added to build.gradle:

dependencies {

implementation 'org.neo4j.springframework.data:spring-data-neo4j-rx-spring-boot-starter:1.1.1'

}

You would also add the dependency manually in case of an existing project.

4.3. Configure the project

Now open any of those projects in your favorite IDE.

Find application.properties and configure your Neo4j credentials:

org.neo4j.driver.uri=bolt://localhost:7687

org.neo4j.driver.authentication.username=neo4j

org.neo4j.driver.authentication.password=secret

This is the bare minimum of what you need to connect to a Neo4j instance.

| It is not necessary to add any programmatic configuration of the driver when you use this starter. SDN/RX repositories will be automatically enabled by this starter. |

4.4. Create your domain

Our domain layer should accomplish two things:

-

Map your graph to objects

-

Provide access to those

4.4.1. Example Node-Entity

SDN/RX fully supports unmodifiable entities, for both Java and data classes in Kotlin.

Therefore, we will focus on immutable entities here. Listing 6 shows such an entity.

| SDN/RX supports all data types the Neo4j Java Driver supports, see Map Neo4j types to native language types inside the chapter "The Cypher type system". Future versions will support additional converters. |

import static org.neo4j.springframework.data.core.schema.Relationship.Direction.*;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import org.neo4j.springframework.data.core.schema.Id;

import org.neo4j.springframework.data.core.schema.Node;

import org.neo4j.springframework.data.core.schema.Property;

import org.neo4j.springframework.data.core.schema.Relationship;

@Node("Movie") (1)

public class MovieEntity {

@Id (2)

private final String title;

@Property("tagline") (3)

private final String description;

@Relationship(type = "ACTED_IN", direction = INCOMING) (4)

private Map<PersonEntity, Roles> actorsAndRoles = new HashMap<>();

@Relationship(type = "DIRECTED", direction = INCOMING)

private List<PersonEntity> directors = new ArrayList<>();

public MovieEntity(String title, String description) { (5)

this.title = title;

this.description = description;

}

// Getters omitted for brevity

}

| 1 | @Node is used to mark this class as a managed entity.

It also is used to configure the Neo4j label.

The label defaults to the name of the class, if you’re just using plain @Node. |

| 2 | Each entity has to have an id.

The movie class shown here uses the attribute title as a unique business key.

If you don’t have such a unique key, you can use the combination of @Id and @GeneratedValue

to configure SDN/RX to use Neo4j’s internal id.

We also provide generators for UUIDs. |

| 3 | This shows @Property as a way to use a different name for the field than for the graph property. |

| 4 | This defines a relationship to a class of type PersonEntity and the relationship type ACTED_IN |

| 5 | This is the constructor to be used by your application code. |

As a general remark: Immutable entities using internally generated ids are a bit contradictory, as SDN/RX needs a way to set the field with the value generated by the database.

If you don’t find a good business key or don’t want to use a generator for IDs, here’s the same entity using the internally generated id together with a business constructor and a so-called wither method that is used by SDN/RX:

import org.neo4j.springframework.data.core.schema.GeneratedValue;

import org.neo4j.springframework.data.core.schema.Id;

import org.neo4j.springframework.data.core.schema.Node;

import org.neo4j.springframework.data.core.schema.Property;

import org.springframework.data.annotation.PersistenceConstructor;

@Node("Movie")

public class MovieEntity {

@Id @GeneratedValue

private Long id;

private final String title;

@Property("tagline")

private final String description;

public MovieEntity(String title, String description) { (1)

this.id = null;

this.title = title;

this.description = description;

}

public MovieEntity withId(Long id) { (2)

if (java.util.Objects.equals(this.id, id)) { // null-safe: id is null for entities that have not been saved yet

return this;

} else {

MovieEntity newObject = new MovieEntity(this.title, this.description);

newObject.id = id;

return newObject;

}

}

}

| 1 | This is the constructor to be used by your application code. It sets the id to null, as the field containing the internal id should never be manipulated. |

| 2 | This is a so-called wither for the id-attribute.

It creates a new entity and sets the field accordingly,

without modifying the original entity, thus making it immutable. |
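
The wither idea can also be tried out without any SDN/RX dependency. The following is a minimal sketch under a few assumptions: the class ImmutableMovie and the demo class are hypothetical stand-ins, the mapping annotations are left out on purpose, and, unlike the listing above, the id is assigned through a private constructor so that the field can be final.

```java
import java.util.Objects;

// Plain-Java stand-in for the entity above. The SDN/RX annotations are omitted
// so that the snippet compiles without any dependency (assumption for this sketch).
final class ImmutableMovie {

    private final Long id;              // null until an id has been assigned
    private final String title;
    private final String description;

    // Business constructor: application code never passes an id.
    ImmutableMovie(String title, String description) {
        this(null, title, description);
    }

    private ImmutableMovie(Long id, String title, String description) {
        this.id = id;
        this.title = title;
        this.description = description;
    }

    // Wither: returns this instance if the id is unchanged, otherwise a modified copy.
    ImmutableMovie withId(Long newId) {
        if (Objects.equals(this.id, newId)) {
            return this;
        }
        return new ImmutableMovie(newId, this.title, this.description);
    }

    Long getId() {
        return id;
    }

    String getTitle() {
        return title;
    }

    String getDescription() {
        return description;
    }
}

class WitherDemo {

    public static void main(String[] args) {
        ImmutableMovie unsaved = new ImmutableMovie("The Matrix", "Welcome to the Real World");
        ImmutableMovie saved = unsaved.withId(42L); // 42 is an arbitrary example id

        System.out.println(unsaved.getId());             // the original instance is untouched
        System.out.println(saved.getId());               // the copy carries the new id
        System.out.println(saved == saved.withId(42L));  // same id, same instance
    }
}
```

The comparison uses Objects.equals because the id of a fresh instance is null, so calling equals on the field directly would fail for new entities.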

You can of course use SDN/RX with Kotlin and model your domain with Kotlin’s data classes. Project Lombok is an alternative if you want or need to stay purely within Java.

4.4.2. Declaring Spring Data repositories

You basically have two options here: You can work store agnostic with SDN/RX and make your domain-specific repository extend one of

-

org.springframework.data.repository.Repository

-

org.springframework.data.repository.CrudRepository

-

org.springframework.data.repository.reactive.ReactiveCrudRepository

-

org.springframework.data.repository.reactive.ReactiveSortingRepository

Choose imperative and reactive accordingly.

| While technically not prohibited, it is not recommended to mix imperative and reactive database access in the same application. We won’t support you with scenarios like this. |

The other option is to settle on a store-specific implementation and gain all the methods we support out of the box. The advantage of this approach is also its biggest disadvantage: Once out, all those methods will be part of your API. Most of the time, it is harder to take something away than to add it afterwards. Furthermore, using store specifics leaks your store into your domain. From a performance point of view, there is no penalty.

A repository fitting the first movie entity above, which uses its title of type String as id, looks like this:

import reactor.core.publisher.Mono;

import org.neo4j.springframework.data.repository.ReactiveNeo4jRepository;

public interface MovieRepository extends ReactiveNeo4jRepository<MovieEntity, String> {

Mono<MovieEntity> findOneByTitle(String title);

}

This repository can be used in any Spring component like this:

import reactor.core.publisher.Flux;

import reactor.core.publisher.Mono;

import org.neo4j.springframework.data.examples.spring_boot.domain.MovieEntity;

import org.neo4j.springframework.data.examples.spring_boot.domain.MovieRepository;

import org.springframework.http.MediaType;

import org.springframework.web.bind.annotation.DeleteMapping;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.PathVariable;

import org.springframework.web.bind.annotation.PutMapping;

import org.springframework.web.bind.annotation.RequestBody;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.bind.annotation.RestController;

@RestController

@RequestMapping("/movies")

public class MovieController {

private final MovieRepository movieRepository;

public MovieController(MovieRepository movieRepository) {

this.movieRepository = movieRepository;

}

@PutMapping

Mono<MovieEntity> createOrUpdateMovie(@RequestBody MovieEntity newMovie) {

return movieRepository.save(newMovie);

}

@GetMapping(value = { "", "/" }, produces = MediaType.TEXT_EVENT_STREAM_VALUE)

Flux<MovieEntity> getMovies() {

return movieRepository

.findAll();

}

@GetMapping("/by-title")

Mono<MovieEntity> byTitle(@RequestParam String title) {

return movieRepository.findOneByTitle(title);

}

@DeleteMapping("/{id}")

Mono<Void> delete(@PathVariable String id) {

return movieRepository.deleteById(id);

}

}

Testing reactive code is done with a reactor.test.StepVerifier.

Have a look at the corresponding documentation of Project Reactor or see our example code.


5. Object Mapping

The following sections will explain the process of mapping between your graph and your domain. It is split into two parts. The first part explains the actual mapping and the available tools for you to describe how to map nodes, relationships and properties to objects. The second part will have a look at Spring Data’s object mapping fundamentals. It gives valuable tips on general mapping, why you should prefer immutable domain objects and how you can model them with Java or Kotlin.

5.1. Metadata-based Mapping

To take full advantage of the object mapping functionality inside SDN/RX, you should annotate your mapped objects with the @Node annotation.

Although it is not necessary for the mapping framework to have this annotation (your POJOs are mapped correctly, even without any annotations),

it lets the classpath scanner find and pre-process your domain objects to extract the necessary metadata.

If you do not use this annotation, your application takes a slight performance hit the first time you store a domain object,

because the mapping framework needs to build up its internal metadata model so that it knows about the properties of your domain object and how to persist them.

5.1.1. Mapping Annotation Overview

From SDN/RX:

-

@Node: Applied at the class level to indicate this class is a candidate for mapping to the database.

-

@Id: Applied at the field level to mark the field used for identity purpose.

-

@GeneratedValue: Applied at the field level together with @Id to specify how unique identifiers should be generated.

-

@Property: Applied at the field level to modify the mapping from attributes to properties.

-

@Relationship: Applied at the field level to specify the details of a relationship.

-

@DynamicLabels: Applied at the field level to specify the source of dynamic labels.

From Spring Data Commons:

-

@org.springframework.data.annotation.Id: Same as @Id from SDN/RX; in fact, @Id is annotated with Spring Data Commons' Id annotation.

-

@CreatedBy: Applied at the field level to indicate the creator of a node.

-

@CreatedDate: Applied at the field level to indicate the creation date of a node.

-

@LastModifiedBy: Applied at the field level to indicate the author of the last change to a node.

-

@LastModifiedDate: Applied at the field level to indicate the last modification date of a node.

-

@PersistenceConstructor: Applied at one constructor to mark it as the preferred constructor when reading entities.

-

@Persistent: Applied at the class level to indicate this class is a candidate for mapping to the database.

-

@Version: Applied at the field level; it is used for optimistic locking and checked for modification on save operations. The initial value is zero, which is bumped automatically on every update.

Have a look at Chapter 9 for all annotations regarding auditing support.

5.1.2. The basic building block: @Node

The @Node annotation is used to mark a class as a managed domain class, subject to the classpath scanning by the mapping context.

To map an Object to nodes in the graph and vice versa, we need a label to identify the class to map to and from.

@Node has an attribute labels that allows you to configure one or more labels to be used when reading and writing instances of the annotated class.

The value attribute is an alias for labels.

If you don’t specify a label, then the simple class name will be used as the primary label.

In case you want to provide multiple labels, you could either:

-

Supply an array to the labels property. The first element in the array will be considered the primary label.

-

Supply a value for primaryLabel and put the additional labels in labels.

The primary label should always be the most concrete label that reflects your domain class.

For each instance of an annotated class that is written through a repository or through the Neo4j template, one node in the graph with at least the primary label will be written. Vice versa, all nodes with the primary label will be mapped to the instances of the annotated class.
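
The label rules described above can be sketched in plain Java. This is an illustration only, not the SDN/RX implementation: the annotation NodeSketch is a hypothetical stand-in that mimics the labels and primaryLabel attributes of @Node, and LabelResolver and the three sample classes are made up for this example.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-in for @Node, reduced to the two attributes discussed here.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.TYPE)
@interface NodeSketch {
    String[] labels() default {};
    String primaryLabel() default "";
}

@NodeSketch
class Actor { }

@NodeSketch(labels = {"Movie", "Film"})
class MovieWithTwoLabels { }

@NodeSketch(primaryLabel = "Person", labels = {"Human"})
class PersonWithPrimaryLabel { }

final class LabelResolver {

    private LabelResolver() { }

    // Returns all static labels of the given class, with the primary label first.
    static List<String> staticLabels(Class<?> type) {
        NodeSketch node = type.getAnnotation(NodeSketch.class);
        List<String> result = new ArrayList<>();
        if (node == null) {
            // Not annotated at all: fall back to the simple class name.
            result.add(type.getSimpleName());
            return result;
        }
        List<String> labels = Arrays.asList(node.labels());
        if (!node.primaryLabel().isEmpty()) {
            // Explicit primary label, everything in labels is additional.
            result.add(node.primaryLabel());
            result.addAll(labels);
        } else if (!labels.isEmpty()) {
            // No explicit primary label: the first array element takes that role.
            result.addAll(labels);
        } else {
            // Plain annotation without attributes: the simple class name is used.
            result.add(type.getSimpleName());
        }
        return result;
    }
}
```

Calling LabelResolver.staticLabels(PersonWithPrimaryLabel.class) yields a list starting with Person followed by Human, which mirrors the second option in the list above.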

The @Node annotation is not inherited from super-types and interfaces.

You can however annotate your domain classes individually at every inheritance level.

This allows polymorphic queries: You can pass in base or intermediate classes and retrieve the correct, concrete instance for your nodes.

This is only supported for abstract bases annotated with @Node.

The labels defined on such a class will be used as additional labels together with the labels of the concrete implementations.

Dynamic or "runtime" managed labels

All labels implicitly defined through the simple class name or explicitly via the @Node annotation are static.

They cannot be changed during runtime.

If you need additional labels that can be manipulated during runtime, you can use @DynamicLabels.

@DynamicLabels is an annotation on field level and marks an attribute of type java.util.Collection<String> (a List or Set, for example) as source of dynamic labels.

If this annotation is present, all labels present on a node and not statically mapped via @Node and the class names, will be collected into that collection during load.

During writes, all labels of the node will be replaced with the statically defined labels plus the contents of the collection.
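
The load and write behaviour described above boils down to two set operations. The following sketch uses plain Java collections to show them; the class DynamicLabelsSketch and its method names are hypothetical and only model the described semantics, they are not part of SDN/RX.

```java
import java.util.LinkedHashSet;
import java.util.Set;

final class DynamicLabelsSketch {

    private DynamicLabelsSketch() { }

    // On load: every label on the node that is not statically mapped
    // is collected into the dynamic labels collection.
    static Set<String> dynamicLabelsOnLoad(Set<String> labelsOnNode, Set<String> staticLabels) {
        Set<String> dynamic = new LinkedHashSet<>(labelsOnNode);
        dynamic.removeAll(staticLabels);
        return dynamic;
    }

    // On write: the node ends up with exactly the static labels
    // plus the current contents of the dynamic labels collection.
    static Set<String> labelsOnWrite(Set<String> staticLabels, Set<String> dynamicLabels) {
        Set<String> written = new LinkedHashSet<>(staticLabels);
        written.addAll(dynamicLabels);
        return written;
    }
}
```

A label that was loaded into the collection and then removed from it is therefore gone from the node after the next write, regardless of who added it in the first place.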

If other applications add additional labels to nodes, don’t use @DynamicLabels.

If @DynamicLabels is present on a managed entity, the resulting set of labels will be "the truth" written to the database.


5.1.3. Identifying instances: @Id

While @Node creates a mapping between a class and nodes having a specific label,

we also need to make the connection between individual instances of that class (objects) and instances of the node.

This is where @Id comes into play.

@Id marks an attribute of the class to be the unique identifier of the object.

That unique identifier is in an optimal world a unique business key or in other words, a natural key.

@Id can be used on all attributes with a supported simple type.

Natural keys are, however, pretty hard to find. People’s names, for example, are seldom unique, change over time or, worse, not everyone has a first and last name.

We therefore support two different kinds of surrogate keys.

On an attribute of type long or Long, @Id can be used with @GeneratedValue.

This maps the Neo4j internal id, which is not a property on a node or relationship and usually not visible,

to the attribute and allows SDN/RX to retrieve individual instances of the class.

@GeneratedValue provides the attribute generatorClass.

generatorClass can be used to specify a class implementing IdGenerator.

An IdGenerator is a functional interface and its generateId takes the primary label and the instance to generate an Id for.

We support UUIDStringGenerator as one implementation out of the box.

You can also specify a Spring Bean from the application context on @GeneratedValue via generatorRef.

That bean also needs to implement IdGenerator, but can make use of everything in the context, including the Neo4j client or template to interact with the database.
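
To make the shape of a generator more tangible, here is a minimal sketch. It assumes an interface with a single generateId method taking the primary label and the entity, as described above; IdGeneratorSketch is a local stand-in so that the snippet compiles on its own, and PrefixedUuidGenerator is a hypothetical implementation, not something shipped with SDN/RX.

```java
import java.util.Locale;
import java.util.UUID;

// Local stand-in mirroring the shape described above (assumption for this sketch).
@FunctionalInterface
interface IdGeneratorSketch<T> {
    T generateId(String primaryLabel, Object entity);
}

// Hypothetical generator: prefixes a random UUID with the lower-cased primary label.
final class PrefixedUuidGenerator implements IdGeneratorSketch<String> {

    @Override
    public String generateId(String primaryLabel, Object entity) {
        // The entity is not inspected here; a real generator could derive parts of the id from it.
        return primaryLabel.toLowerCase(Locale.ROOT) + "-" + UUID.randomUUID();
    }
}
```

In an application, a class like this would implement the IdGenerator interface of SDN/RX instead of the stand-in and be referenced through generatorClass on @GeneratedValue.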

| Don’t skip the important notes about ID handling in Section 5.2 |

5.1.4. Mapping properties: @Property

All attributes of a @Node-annotated class will be persisted as properties of Neo4j nodes and relationships.

Without further configuration, the name of the attribute in the Java or Kotlin class will be used as Neo4j property.

If you are working with an existing Neo4j schema or just like to adapt the mapping to your needs, you will need to use @Property.

The name is used to specify the name of the property inside the database.

5.1.5. Connecting nodes: @Relationship

The @Relationship annotation can be used on all attributes that are not a simple type.

It is applicable on attributes of other types annotated with @Node or collections and maps thereof.

The type or the value attribute allow configuration of the relationship’s type, direction allows specifying the direction.

The default direction in SDN/RX is Relationship.Direction#OUTGOING.

We support dynamic relationships.

Dynamic relationships are represented as a Map<String, AnnotatedDomainClass>.

In such a case, the type of the relationship to the other domain class is given by the map’s key and must not be configured through the @Relationship annotation.

Map relationship properties

Neo4j supports defining properties not only on nodes but also on relationships.

To express those properties in the model SDN/RX provides @RelationshipProperties to be applied on a simple Java class.

In the entity class the relationship can be modelled as before but its type has to be a Map with the related node as the key and the relation property class as value.

A relationship property class and its usage may look like this:

Roles

@RelationshipProperties

public class Roles {

private final List<String> roles;

public Roles(List<String> roles) {

this.roles = roles;

}

public List<String> getRoles() {

return roles;

}

}

private Map<PersonEntity, Roles> actorsAndRoles = new HashMap<>();

Relationship query limit

In general there is no limitation of relationships / hops for creating the queries. SDN/RX parses the whole reachable graph from your modelled nodes. It is possible to have self-referencing entities and self-referencing concrete instances.

If these entities or instances form a cycle, we have a strict limit of 2 repetitions of walking the same path through the graph.

For example, if you model a social network of (:Person)-[:HAS_FRIEND]→(:Person)-[:HAS_FRIEND]…, you will only get the friends of the second degree.

If you need a more specific mapping for your domain we advise you to use custom queries.
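
To picture what a cut-off at the second degree means for the HAS_FRIEND example above, the following sketch walks a small in-memory friendship map and stops after a fixed number of hops. It is only an illustration of the effect on the result; it is not the query SDN/RX generates, and the class FriendsOfFriendsSketch, its method and the map-based graph are assumptions made for this example.

```java
import java.util.ArrayDeque;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

final class FriendsOfFriendsSketch {

    private FriendsOfFriendsSketch() { }

    // Collects everyone reachable from start in at most maxHops HAS_FRIEND hops
    // using a breadth-first walk. Cycles are harmless because each person is visited once.
    static Set<String> reachable(Map<String, List<String>> friends, String start, int maxHops) {
        Set<String> found = new LinkedHashSet<>();
        Map<String, Integer> depth = new HashMap<>();
        Deque<String> queue = new ArrayDeque<>();
        depth.put(start, 0);
        queue.add(start);
        while (!queue.isEmpty()) {
            String current = queue.remove();
            int hops = depth.get(current);
            if (hops == maxHops) {
                continue; // cut-off reached, do not follow relationships any further
            }
            for (String friend : friends.getOrDefault(current, Collections.emptyList())) {
                if (!depth.containsKey(friend)) {
                    depth.put(friend, hops + 1);
                    found.add(friend);
                    queue.add(friend);
                }
            }
        }
        return found;
    }
}
```

With a chain of friends Ann, Bob, Cid and Dee and a limit of 2, the walk starting at Ann returns Bob and Cid but not Dee, which is the situation in which a custom query is the better fit.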

5.1.6. A complete example

Putting all those together, we can create a simple domain. We use movies and people with different roles:

MovieEntity

import static org.neo4j.springframework.data.core.schema.Relationship.Direction.*;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import org.neo4j.springframework.data.core.schema.Id;

import org.neo4j.springframework.data.core.schema.Node;

import org.neo4j.springframework.data.core.schema.Property;

import org.neo4j.springframework.data.core.schema.Relationship;

@Node("Movie") (1)

public class MovieEntity {

@Id (2)

private final String title;

@Property("tagline") (3)

private final String description;

@Relationship(type = "ACTED_IN", direction = INCOMING) (4)

private Map<PersonEntity, Roles> actorsAndRoles = new HashMap<>();

@Relationship(type = "DIRECTED", direction = INCOMING)

private List<PersonEntity> directors = new ArrayList<>();

public MovieEntity(String title, String description) { (5)

this.title = title;

this.description = description;

}

// Getters omitted for brevity

}

| 1 | @Node is used to mark this class as a managed entity.

It also is used to configure the Neo4j label.

The label defaults to the name of the class, if you’re just using plain @Node. |

| 2 | Each entity has to have an id. We use the movie’s title as the unique identifier. |

| 3 | This shows @Property as a way to use a different name for the field than for the graph property. |

| 4 | This configures an incoming relationship to a person. |

| 5 | This is the constructor to be used by your application code as well as by SDN/RX. |

People are mapped in two roles here, actors and directors.

The domain class is the same:

PersonEntity

import org.neo4j.springframework.data.core.schema.Id;

import org.neo4j.springframework.data.core.schema.Node;

@Node("Person")

public class PersonEntity {

@Id

private final String name;

private final Integer born;

public PersonEntity(Integer born, String name) {

this.born = born;

this.name = name;

}

public Integer getBorn() {

return born;

}

public String getName() {

return name;

}

}

We haven’t modelled the relationship between movies and people in both directions.

Why is that?

We see the MovieEntity as the aggregate root, owning the relationships.

On the other hand, we want to be able to pull all people from the database without selecting all the movies associated with them.

Please consider your application’s use case before you try to map every relationship in your database in every direction.

While you can do this, you may end up rebuilding a graph database inside your object graph and this is not the intention of a mapping framework.


5.2. Handling and provisioning of unique IDs

5.2.1. Using the internal Neo4j id

The easiest way to give your domain classes a unique identifier is the combination of @Id and @GeneratedValue on a field of type Long (preferably the object, not the scalar long, as a literal null is the better indicator of whether an instance is new or not):

@Node("Movie")

public class MovieEntity {

@Id @GeneratedValue

private Long id;

private String name;

public MovieEntity(String name) {

this.name = name;

}

}

You don’t need to provide a setter for the field. SDN/RX will use reflection to assign the field, but will use a setter if there is one. If you want to create an immutable entity with an internally generated id, you have to provide a wither.

@Node("Movie")

public class MovieEntity {

@Id @GeneratedValue

private final Long id; (1)

private String name;

public MovieEntity(String name) { (2)

this(null, name);

}

private MovieEntity(Long id, String name) { (3)

this.id = id;

this.name = name;

}

public MovieEntity withId(Long id) { (4)

if (this.id != null && this.id.equals(id)) {

return this;

} else {

return new MovieEntity(id, this.name);

}

}

}

| 1 | Immutable final id field indicating a generated value |

| 2 | Public constructor, used by the application and Spring Data |

| 3 | Internally used constructor |

| 4 | This is a so-called wither for the id-attribute.

It creates a new entity and sets the field accordingly, without modifying the original entity, thus making it immutable. |

You either have to provide a setter for the id attribute or something like a wither, if you want to have

-

Advantages: It is pretty clear that the id attribute is the surrogate business key, it takes no further effort or configuration to use it.

-

Disadvantage: It is tied to Neo4j’s internal database id, which is not specific to our application entity and is unique only over the lifetime of a database.

-

Disadvantage: It takes more effort to create an immutable entity
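The wither approach can be tried out without a database. The following is a minimal plain-Java sketch: the class name ImmutableMovie is made up for illustration, and the @Node, @Id and @GeneratedValue annotations are left out so that it compiles without SDN/RX on the classpath. It compares ids in a null-safe way, because a new entity has no id yet.

```java
import java.util.Objects;

// Plain-Java sketch of an immutable entity with a wither.
// Hypothetical class; the SDN/RX annotations are intentionally omitted.
final class ImmutableMovie {

    private final Long id;      // null until the database has assigned an id
    private final String name;

    ImmutableMovie(String name) {
        this(null, name);
    }

    private ImmutableMovie(Long id, String name) {
        this.id = id;
        this.name = name;
    }

    // Wither: returns a copy carrying the new id and never mutates this instance.
    ImmutableMovie withId(Long id) {
        // Objects.equals tolerates a null this.id, the normal state of a new entity.
        return Objects.equals(this.id, id) ? this : new ImmutableMovie(id, this.name);
    }

    Long getId() {
        return id;
    }

    String getName() {
        return name;
    }
}
```

Calling withId(42L) on a fresh instance yields a second object with the id set, while the first one still reports null.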

5.2.2. Use externally provided surrogate keys

The @GeneratedValue annotation can take a class implementing org.neo4j.springframework.data.core.schema.IdGenerator as parameter.

SDN/RX provides InternalIdGenerator (the default) and UUIDStringGenerator out of the box.

The latter generates new UUIDs for each entity and returns them as java.lang.String.

An application entity using that would look like this:

@Node("Movie")

public class MovieEntity {

@Id @GeneratedValue(UUIDStringGenerator.class)

private String id;

private String name;

}

We have to discuss two separate things regarding advantages and disadvantages: the assignment itself and the UUID strategy. A universally unique identifier is meant to be unique for practical purposes. To quote Wikipedia: “Thus, anyone can create a UUID and use it to identify something with near certainty that the identifier does not duplicate one that has already been, or will be, created to identify something else.” Our strategy uses Java’s internal UUID mechanism, employing a cryptographically strong pseudo-random number generator. In most cases that should work fine, but your mileage might vary.

That leaves the assignment itself:

-

Advantage: The application is in full control and can generate a unique key that is just unique enough for the purpose of the application. The generated value will be stable and there won’t be a need to change it later on.

-

Disadvantage: The generation strategy is applied on the application side of things. These days most applications will be deployed in more than one instance to scale nicely. If your strategy is prone to generating duplicates, then inserts will fail because the uniqueness of the primary key will be violated. So while you don’t have to think about a unique business key in this scenario, you have to think more about what to generate.
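To make the UUID strategy discussed above concrete, here is a small sketch of what a UUID-based generator could look like. It assumes the generator simply delegates to java.util.UUID.randomUUID(), and the class name UuidStringSketch is hypothetical. It deliberately does not implement the SDN/RX IdGenerator interface, so it compiles with the JDK alone.

```java
import java.util.UUID;

// Hypothetical stand-in for a UUID-based id generator (not the SDN/RX class).
final class UuidStringSketch {

    // The two parameters mirror generateId(String primaryLabel, Object entity)
    // as used in this section; a random UUID needs neither of them.
    static String generateId(String primaryLabel, Object entity) {
        // randomUUID() produces a version 4 UUID from a cryptographically strong source.
        return UUID.randomUUID().toString();
    }
}
```

Because every application instance draws its ids independently, no coordination between instances is needed, which is the main appeal of this strategy.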

You have several options to roll your own ID generator. One is a POJO implementing a generator:

import java.util.concurrent.atomic.AtomicInteger;

import org.neo4j.springframework.data.core.schema.IdGenerator;

import org.springframework.util.StringUtils;

public class TestSequenceGenerator implements IdGenerator<String> {

private final AtomicInteger sequence = new AtomicInteger(0);

@Override

public String generateId(String primaryLabel, Object entity) {

return StringUtils.uncapitalize(primaryLabel) +

"-" + sequence.incrementAndGet();

}

}

Another option is to provide an additional Spring Bean like this:

@Component

class MyIdGenerator implements IdGenerator<String> {

private final Neo4jClient neo4jClient;

public MyIdGenerator(Neo4jClient neo4jClient) {

this.neo4jClient = neo4jClient;

}

@Override

public String generateId(String primaryLabel, Object entity) {

return neo4jClient.query("YOUR CYPHER QUERY FOR THE NEXT ID") (1)

.fetchAs(String.class).one().get();

}

}

| 1 | Use exactly the query or logic you need. |

The generator above would be configured as a bean reference like this:

@Node("Movie")

public class MovieEntity {

@Id @GeneratedValue(generatorRef = "myIdGenerator")

private String id;

private String name;

}

5.2.3. Using a business key

We have been using a business key in the complete example’s MovieEntity and PersonEntity.

The name of the person is assigned at construction time, both by your application and while being loaded through Spring Data.

This is only possible, if you find a stable, unique business key, but makes great immutable domain objects.

-

Advantages: Using a business or natural key as primary key is natural. The entity in question is clearly identified and it feels most of the time just right in the further modelling of your domain.

-

Disadvantages: Business keys as primary keys will be hard to update once you realise that the key you found is not as stable as you thought. Often it turns out that it can change, even when promised otherwise. Apart from that, finding identifiers that are truly unique for a thing is hard.

5.3. Spring Data Object Mapping Fundamentals

This section covers the fundamentals of Spring Data object mapping, object creation, field and property access, mutability and immutability.

Core responsibility of the Spring Data object mapping is to create instances of domain objects and map the store-native data structures onto those. This means we need two fundamental steps:

-

Instance creation by using one of the constructors exposed.

-

Instance population to materialize all exposed properties.

5.3.1. Object creation

Spring Data automatically tries to detect a persistent entity’s constructor to be used to materialize objects of that type. The resolution algorithm works as follows:

-

If there is a no-argument constructor, it will be used. Other constructors will be ignored.

-

If there is a single constructor taking arguments, it will be used.

-

If there are multiple constructors taking arguments, the one to be used by Spring Data will have to be annotated with @PersistenceConstructor.

The value resolution assumes constructor argument names to match the property names of the entity,

i.e. the resolution will be performed as if the property was to be populated, including all customizations in mapping (different datastore column or field name etc.).

This also requires either parameter names information available in the class file or an @ConstructorProperties annotation being present on the constructor.
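The three resolution rules listed above can be sketched with plain reflection. This is only an illustration of the described order, not Spring Data's actual implementation: the class ConstructorResolutionSketch and the marker annotation @Preferred are hypothetical, with @Preferred standing in for @PersistenceConstructor so that the example needs nothing but the JDK.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Constructor;

final class ConstructorResolutionSketch {

    // Hypothetical marker standing in for Spring Data's @PersistenceConstructor.
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.CONSTRUCTOR)
    @interface Preferred {}

    static Constructor<?> resolve(Class<?> type) {
        Constructor<?>[] all = type.getDeclaredConstructors();
        // Rule 1: a no-argument constructor wins, other constructors are ignored.
        for (Constructor<?> candidate : all) {
            if (candidate.getParameterCount() == 0) {
                return candidate;
            }
        }
        // Rule 2: a single constructor taking arguments is used as-is.
        if (all.length == 1) {
            return all[0];
        }
        // Rule 3: several constructors with arguments require an explicit marker.
        for (Constructor<?> candidate : all) {
            if (candidate.isAnnotationPresent(Preferred.class)) {
                return candidate;
            }
        }
        throw new IllegalStateException("No usable constructor on " + type.getName());
    }

    // Sample types exercising each rule.
    static class WithDefault {
        WithDefault() {}
        WithDefault(String a) {}
    }

    static class Single {
        Single(String a, int b) {}
    }

    static class Annotated {
        Annotated(String a) {}
        @Preferred Annotated(String a, int b) {}
    }
}
```

For the sample types, resolve picks the no-argument constructor of WithDefault, the only constructor of Single, and the annotated two-argument constructor of Annotated.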

5.3.2. Property population

Once an instance of the entity has been created, Spring Data populates all remaining persistent properties of that class. Unless already populated by the entity’s constructor (i.e. consumed through its constructor argument list), the identifier property will be populated first to allow the resolution of cyclic object references. After that, all non-transient properties that have not already been populated by the constructor are set on the entity instance. For that we use the following algorithm:

-

If the property is immutable but exposes a wither method (see below), we use the wither to create a new entity instance with the new property value.

-

If property access (i.e. access through getters and setters) is defined, we are invoking the setter method.

-

By default, we set the field value directly.

Let’s have a look at the following entity:

class Person {

private final @Id Long id; (1)

private final String firstname, lastname; (2)

private final LocalDate birthday;

private final int age; (3)

private String comment; (4)

private @AccessType(Type.PROPERTY) String remarks; (5)

static Person of(String firstname, String lastname, LocalDate birthday) { (6)

return new Person(null, firstname, lastname, birthday,

Period.between(birthday, LocalDate.now()).getYears());

}

Person(Long id, String firstname, String lastname, LocalDate birthday, int age) { (6)

this.id = id;

this.firstname = firstname;

this.lastname = lastname;

this.birthday = birthday;

this.age = age;

}

Person withId(Long id) { (1)

return new Person(id, this.firstname, this.lastname, this.birthday, this.age);

}

void setRemarks(String remarks) { (5)

this.remarks = remarks;

}

}

| 1 | The identifier property is final but set to null in the constructor.

The class exposes a withId(…) method that’s used to set the identifier, e.g. when an instance is inserted into the datastore and an identifier has been generated.

The original Person instance stays unchanged as a new one is created.

The same pattern is usually applied for other properties that are store managed but might have to be changed for persistence operations. |

| 2 | The firstname and lastname properties are ordinary immutable properties potentially exposed through getters. |

| 3 | The age property is an immutable but derived one from the birthday property.

With the design shown, the database value will trump the defaulting as Spring Data uses the only declared constructor.

Even if the intent is that the calculation should be preferred, it’s important that this constructor also takes age as parameter (to potentially ignore it)

as otherwise the property population step will attempt to set the age field and fail due to it being immutable and no wither being present. |

| 4 | The comment property is mutable and is populated by setting its field directly. |

| 5 | The remarks property is mutable and, because it is annotated with @AccessType(Type.PROPERTY), is populated by invoking its setter method. |

| 6 | The class exposes a factory method and a constructor for object creation.

The core idea here is to use factory methods instead of additional constructors to avoid the need for constructor disambiguation through @PersistenceConstructor.

Instead, defaulting of properties is handled within the factory method. |

5.3.3. General recommendations

-

Try to stick to immutable objects — Immutable objects are straightforward to create as materializing an object is then a matter of calling its constructor only. Also, this keeps your domain objects from being littered with setter methods that allow client code to manipulate the object’s state. If you need those, prefer to make them package protected so that they can only be invoked by a limited number of co-located types. Constructor-only materialization is up to 30% faster than properties population.

-

Provide an all-args constructor — Even if you cannot or don’t want to model your entities as immutable values, there’s still value in providing a constructor that takes all properties of the entity as arguments, including the mutable ones, as this allows the object mapping to skip the property population for optimal performance.

-

Use factory methods instead of overloaded constructors to avoid @PersistenceConstructor — With an all-argument constructor needed for optimal performance, we usually want to expose more application use case specific constructors that omit things like auto-generated identifiers etc. It’s an established pattern to rather use static factory methods to expose these variants of the all-args constructor.

-

Make sure you adhere to the constraints that allow the generated instantiator and property accessor classes to be used

-

For identifiers to be generated, still use a final field in combination with a wither method

-

Use Lombok to avoid boilerplate code — As persistence operations usually require a constructor taking all arguments, their declaration becomes a tedious repetition of boilerplate parameter to field assignments that can best be avoided by using Lombok’s @AllArgsConstructor.

5.3.4. Kotlin support

Spring Data adapts specifics of Kotlin to allow object creation and mutation.

Kotlin object creation

Kotlin classes are supported for instantiation; all classes are immutable by default and require explicit property declarations to define mutable properties.

Consider the following data class Person:

data class Person(val id: String, val name: String)

The class above compiles to a typical class with an explicit constructor.

We can customize this class by adding another constructor and annotating it with @PersistenceConstructor to indicate a constructor preference:

data class Person(var id: String, val name: String) {

@PersistenceConstructor

constructor(id: String) : this(id, "unknown")

}

Kotlin supports parameter optionality by allowing default values to be used if a parameter is not provided.

When Spring Data detects a constructor with parameter defaulting, then it leaves these parameters absent

if the data store does not provide a value (or simply returns null) so Kotlin can apply parameter defaulting.

Consider the following class that applies parameter defaulting for name:

data class Person(var id: String, val name: String = "unknown")

Every time the name parameter is either not part of the result or its value is null, the name defaults to unknown.

Property population of Kotlin data classes

In Kotlin, all classes are immutable by default and require explicit property declarations to define mutable properties.

Consider the following data class Person:

data class Person(val id: String, val name: String)

This class is effectively immutable.

It allows you to create new instances, as Kotlin generates a copy(…) method that creates new object instances

copying all property values from the existing object and applying property values provided as arguments to the method.

6. Working with Spring Data Repositories

The goal of the Spring Data repository abstraction is to significantly reduce the amount of boilerplate code required to implement data access layers for various persistence stores.

6.1. Core concepts

The central interface in the Spring Data repository abstraction is Repository.

It takes the domain class to manage as well as the ID type of the domain class as type arguments.

This interface acts primarily as a marker interface to capture the types to work with and to help you to discover interfaces that extend this one.

The CrudRepository provides sophisticated CRUD functionality for the entity class that is being managed.

CrudRepository interface

public interface CrudRepository<T, ID> extends Repository<T, ID> {

<S extends T> S save(S entity); (1)

Optional<T> findById(ID primaryKey); (2)

Iterable<T> findAll(); (3)

long count(); (4)

void delete(T entity); (5)

boolean existsById(ID primaryKey); (6)

// … more functionality omitted.

}

| 1 | Saves the given entity. |

| 2 | Returns the entity identified by the given ID. |

| 3 | Returns all entities. |

| 4 | Returns the number of entities. |

| 5 | Deletes the given entity. |

| 6 | Indicates whether an entity with the given ID exists. |

We also provide technology-specific abstractions, such as Neo4jRepository and the reactive variant, ReactiveNeo4jRepository.

Those interfaces extend CrudRepository and expose the capabilities of the underlying persistence technology

in addition to the rather generic persistence technology-agnostic interfaces such as CrudRepository.

Also, methods returning an Iterable are narrowed down to return a java.util.List instead.


On top of the CrudRepository, there is a PagingAndSortingRepository abstraction that adds additional methods to ease paginated access to entities:

PagingAndSortingRepository interface

public interface PagingAndSortingRepository<T, ID> extends CrudRepository<T, ID> {

Iterable<T> findAll(Sort sort);

Page<T> findAll(Pageable pageable);

}

To access the second page of User by a page size of 20, you could do something like the following:

PagingAndSortingRepository<User, Long> repository = // … get access to a bean

Page<User> users = repository.findAll(PageRequest.of(1, 20));

In addition to query methods, query derivation for both count and delete queries is available. The following listing shows the interface definition for a derived count query:

interface UserRepository extends CrudRepository<User, Long> {

long countByLastname(String lastname);

}

The following listing shows the interface definition for a derived delete query:

interface UserRepository extends CrudRepository<User, Long> {

long deleteByLastname(String lastname);

List<User> removeByLastname(String lastname);

}

6.2. Query methods

Standard CRUD functionality repositories usually have queries on the underlying datastore. With Spring Data, declaring those queries becomes a four-step process:

-

Declare an interface extending Repository or one of its subinterfaces and type it to the domain class and ID type that it should handle, as shown in the following example:

interface PersonRepository extends Repository<Person, Long> { … }

-

Declare query methods on the interface.

interface PersonRepository extends Repository<Person, Long> {
    List<Person> findByLastname(String lastname);
}

-

Provide some JavaConfig to set up Spring to create proxy instances for those interfaces.

import org.neo4j.driver.AuthTokens;
import org.neo4j.driver.Driver;
import org.neo4j.driver.GraphDatabase;
import org.neo4j.springframework.data.config.AbstractNeo4jConfig;
import org.neo4j.springframework.data.repository.config.EnableNeo4jRepositories;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.transaction.annotation.EnableTransactionManagement;

@Configuration (1)
@EnableNeo4jRepositories (2)
@EnableTransactionManagement (3)
public class Config extends AbstractNeo4jConfig { (4)

    @Bean
    public Driver driver() { (5)
        return GraphDatabase.driver("bolt://localhost:7687", AuthTokens.basic("neo4j", "secret"));
    }
}

The Driver bean is required to provide the actual connection to the database.

Note that the configuration does not configure a package explicitly, because the package of the annotated class is used by default. To customize the package to scan, use one of the basePackage… attributes of the @EnableNeo4jRepositories annotation.

-

Inject the repository instance and use it, as shown in the following example:

class SomeClient {

    private final PersonRepository repository;

    SomeClient(PersonRepository repository) {
        this.repository = repository;
    }

    void doSomething() {
        List<Person> persons = repository.findByLastname("Matthews");
    }
}

The following sections explain each step in detail.

6.3. Defining Repository Interfaces

First, define a domain class-specific repository interface.

The interface must extend Repository and be typed to the domain class and an ID type.

If you want to expose CRUD methods for that domain type, extend CrudRepository instead of Repository.

6.3.1. Fine-tuning Repository Definition

Typically, your repository interface extends Repository, CrudRepository, or PagingAndSortingRepository.

Alternatively, if you do not want to extend Spring Data interfaces, you can also annotate your repository interface with @RepositoryDefinition.

Extending CrudRepository exposes a complete set of methods to manipulate your entities.

If you prefer to be selective about the methods being exposed, copy the methods you want to expose from CrudRepository into your domain repository.

Doing so lets you define your own abstractions on top of the provided Spring Data Repositories functionality.

The following example shows how to selectively expose CRUD methods (findById and save, in this case):

@NoRepositoryBean

interface MyBaseRepository<T, ID> extends Repository<T, ID> {

Optional<T> findById(ID id);

<S extends T> S save(S entity);

}

interface UserRepository extends MyBaseRepository<User, Long> {

User findByEmailAddress(EmailAddress emailAddress);

}

In the prior example, you defined a common base interface for all your domain repositories and exposed findById(…) as well as save(…).

These methods are routed into the base repository implementation (for the imperative version, the implementation is SimpleNeo4jRepository),

because they match the method signatures in CrudRepository.

So the UserRepository can now save users, find individual users by ID, and trigger a query to find Users by email address.

The intermediate repository interface is annotated with @NoRepositoryBean.

Make sure you add that annotation to all repository interfaces for which Spring Data should not create instances at runtime.


6.3.2. Using Repositories with Multiple Spring Data Modules

Using a unique Spring Data module in your application makes things simple, because all repository interfaces in the defined scope are bound to one Spring Data module. Sometimes, applications require using more than one Spring Data module. In such cases, a repository definition must distinguish between persistence technologies. When it detects multiple repository factories on the class path, Spring Data enters strict repository configuration mode. Strict configuration uses details on the repository or the domain class to decide about Spring Data module binding for a repository definition:

-

If the repository definition extends the module-specific repository, then it is a valid candidate for the particular Spring Data module.

-

If the domain class is annotated with the module-specific type annotation, then it is a valid candidate for the particular Spring Data module. Spring Data modules accept either third-party annotations (such as JPA’s @Entity) or provide their own annotations (such as @Node for Spring Data Neo4j⚡RX).

The following example shows a repository that uses module-specific interfaces (JPA in this case):

interface MyRepository extends JpaRepository<User, Long> { }

@NoRepositoryBean

interface MyBaseRepository<T, ID> extends JpaRepository<T, ID> { … }

interface UserRepository extends MyBaseRepository<User, Long> { … }

MyRepository and UserRepository extend JpaRepository in their type hierarchy.

They are valid candidates for the Spring Data JPA module.

The following example shows a repository that uses generic interfaces:

interface AmbiguousRepository extends Repository<User, Long> { … }

@NoRepositoryBean

interface MyBaseRepository<T, ID> extends CrudRepository<T, ID> { … }

interface AmbiguousUserRepository extends MyBaseRepository<User, Long> { … }

AmbiguousRepository and AmbiguousUserRepository extend only Repository and CrudRepository in their type hierarchy.

While this is perfectly fine when using a unique Spring Data module, multiple modules cannot distinguish to which particular Spring Data module these repositories should be bound.

The following example shows a repository that uses domain classes with annotations:

interface PersonRepository extends Repository<Person, Long> { … }

@Entity

class Person { … }

interface UserRepository extends Repository<User, Long> { … }

@Node

class User { … }

PersonRepository references Person, which is annotated with the JPA @Entity annotation, so this repository clearly belongs to Spring Data JPA.

UserRepository references User, which is annotated with SDN/RX’s @Node annotation.

The following bad example shows a repository that uses domain classes with mixed annotations:

interface JpaPersonRepository extends Repository<Person, Long> { … }

interface Neo4jPersonRepository extends Repository<Person, Long> { … }

@Entity

@Node

class Person { … }

This example shows a domain class using both JPA and Spring Data Neo4j⚡RX annotations.

It defines two repositories, JpaPersonRepository and Neo4jPersonRepository.

One is intended for JPA and the other for Neo4j usage.

Spring Data is no longer able to tell the repositories apart, which leads to undefined behavior.

Repository type details and distinguishing domain class annotations are used for strict repository configuration to identify repository candidates for a particular Spring Data module. Using multiple persistence technology-specific annotations on the same domain type is possible and enables reuse of domain types across multiple persistence technologies. However, Spring Data can then no longer determine a unique module with which to bind the repository.

The last way to distinguish repositories is by scoping repository base packages. Base packages define the starting points for scanning for repository interface definitions, which implies having repository definitions located in the appropriate packages.

The following example shows annotation-driven configuration of base packages:

@Configuration

@EnableJpaRepositories(basePackages = "com.acme.repositories.jpa")

@EnableNeo4jRepositories(basePackages = "com.acme.repositories.neo4j")

class Config {

}

6.4. Defining Query Methods

The repository proxy has two ways to derive a store-specific query from the method name:

-

By deriving the query from the method name directly.

-

By using a manually defined query.

The next section describes the available options.

6.4.1. Query Lookup Strategies

The following strategies are available for the repository infrastructure to resolve the query.

In your Java configuration, you can use the queryLookupStrategy attribute of the EnableNeo4jRepositories annotation.

-

CREATE attempts to construct a store-specific query from the query method name. The general approach is to remove a given set of well known prefixes from the method name and parse the rest of the method. You can read more about query construction in “Section 6.4.2”.

-

USE_DECLARED_QUERY tries to find a declared query and throws an exception if it cannot find one. The query can be defined by an annotation somewhere or declared by other means. Consult the documentation of the specific store to find available options for that store. If the repository infrastructure does not find a declared query for the method at bootstrap time, it fails.

-

CREATE_IF_NOT_FOUND (default) combines CREATE and USE_DECLARED_QUERY. It looks up a declared query first, and, if no declared query is found, it creates a custom method name-based query. This is the default lookup strategy and, thus, is used if you do not configure anything explicitly. It allows quick query definition by method names but also custom-tuning of these queries by introducing declared queries as needed.
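The decision each strategy makes can be summarized in a few lines of plain Java. This is a toy model of the behavior described above, not the repository infrastructure itself: the class QueryLookupSketch, its enum and the returned label strings are all made up for illustration.

```java
import java.util.Optional;

final class QueryLookupSketch {

    // Hypothetical enum mirroring the three strategy names from this section.
    enum Strategy { CREATE, USE_DECLARED_QUERY, CREATE_IF_NOT_FOUND }

    // declaredQuery models a manually declared query, if there is one.
    // The returned string only labels which path was taken.
    static String resolve(Strategy strategy, String methodName, Optional<String> declaredQuery) {
        switch (strategy) {
            case CREATE:
                // Always derive from the method name, declared queries are not consulted.
                return "derived from " + methodName;
            case USE_DECLARED_QUERY:
                // A missing declared query is an error.
                return declaredQuery
                        .map(query -> "declared: " + query)
                        .orElseThrow(() -> new IllegalStateException("No declared query for " + methodName));
            case CREATE_IF_NOT_FOUND:
            default:
                // Prefer a declared query, fall back to derivation.
                return declaredQuery
                        .map(query -> "declared: " + query)
                        .orElse("derived from " + methodName);
        }
    }
}
```

With CREATE_IF_NOT_FOUND, a method without a declared query falls back to derivation, whereas the same method under USE_DECLARED_QUERY raises an error.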

6.4.2. Query Creation

The query builder mechanism built into Spring Data repository infrastructure is useful for building constraining queries over entities of the repository.

The mechanism strips the prefixes find…By, read…By, query…By, count…By, and get…By from the method and starts parsing the rest of it.

The introducing clause can contain further expressions, such as a Distinct to set a distinct flag on the query to be created.

However, the first By acts as delimiter to indicate the start of the actual criteria.

At a very basic level, you can define conditions on entity properties and concatenate them with And and Or.

The following example shows how to create a number of queries:

interface PersonRepository extends Repository<Person, Long> {

List<Person> findByEmailAddressAndLastname(EmailAddress emailAddress, String lastname);

// Enables the distinct flag for the query

List<Person> findDistinctPeopleByLastnameOrFirstname(String lastname, String firstname);

List<Person> findPeopleDistinctByLastnameOrFirstname(String lastname, String firstname);

// Enabling ignoring case for an individual property

List<Person> findByLastnameIgnoreCase(String lastname);

// Enabling ignoring case for all suitable properties

List<Person> findByLastnameAndFirstnameAllIgnoreCase(String lastname, String firstname);

// Enabling static ORDER BY for a query

List<Person> findByLastnameOrderByFirstnameAsc(String lastname);

List<Person> findByLastnameOrderByFirstnameDesc(String lastname);

}

There are some general things to notice:

-

The expressions are usually property traversals combined with operators that can be concatenated. You can combine property expressions with AND and OR. You also get support for operators such as Between, LessThan, GreaterThan, and Like for the property expressions.

-

The method parser supports setting an IgnoreCase flag for individual properties (for example, findByLastnameIgnoreCase(…)) or for all properties of a type that supports ignoring case (usually String instances; for example, findByLastnameAndFirstnameAllIgnoreCase(…)).

-

You can apply static ordering by appending an OrderBy clause to the query method that references a property and by providing a sorting direction (Asc or Desc). To create a query method that supports dynamic sorting, see “Section 6.4.4”.
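The prefix stripping described in this section can be approximated with a regular expression. The sketch below is a simplification under stated assumptions: the class MethodNameSketch is hypothetical, it only knows the prefixes named above, and it does not split the criteria into individual properties or operators.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class MethodNameSketch {

    // Prefix, optional subject (for example "DistinctPeople"), then the first "By" as delimiter.
    private static final Pattern PREFIX =
            Pattern.compile("^(find|read|query|count|get)(.*?)By(\\p{Lu}.*)$");

    final boolean distinct;
    final String criteria;  // for example "LastnameOrFirstname"
    final String orderBy;   // for example "FirstnameAsc", empty without static ordering

    private MethodNameSketch(boolean distinct, String criteria, String orderBy) {
        this.distinct = distinct;
        this.criteria = criteria;
        this.orderBy = orderBy;
    }

    static MethodNameSketch parse(String methodName) {
        Matcher matcher = PREFIX.matcher(methodName);
        if (!matcher.matches()) {
            throw new IllegalArgumentException("Not a derivable query method: " + methodName);
        }
        // "Distinct" may appear anywhere in the introducing clause.
        boolean distinct = matcher.group(2).contains("Distinct");
        String rest = matcher.group(3);
        // A static ordering, if present, follows the criteria.
        int order = rest.indexOf("OrderBy");
        String criteria = order < 0 ? rest : rest.substring(0, order);
        String orderBy = order < 0 ? "" : rest.substring(order + "OrderBy".length());
        return new MethodNameSketch(distinct, criteria, orderBy);
    }
}
```

Parsing findDistinctPeopleByLastnameOrFirstname sets the distinct flag and leaves LastnameOrFirstname as criteria, while findByLastnameOrderByFirstnameAsc separates Lastname from the ordering part FirstnameAsc.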

6.4.3. Property Expressions

Property expressions can refer only to a direct property of the managed entity, as shown in the preceding example. At query creation time, you already make sure that the parsed property is a property of the managed domain class. However, you can also define constraints by traversing nested properties. Consider the following method signature:

List<Person> findByAddressZipCode(ZipCode zipCode);

Assume a Person has an Address with a ZipCode.

In that case, the method creates the property traversal x.address.zipCode.

The resolution algorithm starts by interpreting the entire part (AddressZipCode) as the property and checks the domain class for a property with that name (uncapitalized).

If the algorithm succeeds, it uses that property.

If not, the algorithm splits up the source at the camel case parts from the right side into a head and a tail and tries to find the corresponding property — in our example, AddressZip and Code.

If the algorithm finds a property with that head, it takes the tail and continues building the tree down from there, splitting the tail up in the way just described.

If the first split does not match, the algorithm moves the split point to the left (Address, ZipCode) and continues.

Although this should work for most cases, it is possible for the algorithm to select the wrong property.

Suppose the Person class has an addressZip property as well.

The algorithm would match in the first split round already, choose the wrong property, and fail (as the type of addressZip probably has no code property).

To resolve this ambiguity you can use _ inside your method name to manually define traversal points.

So our method name would be as follows:

List<Person> findByAddress_ZipCode(ZipCode zipCode);

Because we treat the underscore character as a reserved character, we strongly advise following standard Java naming conventions (that is, not using underscores in property names but using camel case instead).
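The resolution steps described in this section can be sketched in plain Java. This is a simplified illustration, not Spring Data's actual implementation: the class PropertyPathSketch, its domain types, and the use of declared fields as "properties" are all assumptions made for this example. It tries the whole name first, moves the split point to the left along camel-case boundaries, commits to the first head that matches, and treats an underscore as an explicit traversal point.

```java
import java.lang.reflect.Field;
import java.util.ArrayList;
import java.util.List;

class PropertyPathSketch {

    // Hypothetical domain types, used only for this illustration.
    static class ZipCode { String code; }
    static class Address { ZipCode zipCode; }
    static class Person { Address address; }
    static class Region { String name; }
    // Has both address and addressZip, which triggers the ambiguity described above.
    static class AmbiguousPerson { Address address; Region addressZip; }

    /** Resolves a source such as "AddressZipCode" into a property path starting at the given type. */
    static List<String> resolve(String source, Class<?> type) {
        List<String> path = new ArrayList<>();
        Class<?> current = type;
        // Underscores are explicit traversal points, so every segment is resolved separately.
        for (String segment : source.split("_")) {
            current = resolveSegment(segment, current, path);
        }
        return path;
    }

    private static Class<?> resolveSegment(String segment, Class<?> type, List<String> path) {
        // Start with the entire segment as head, then move the split point to the left.
        for (int split = segment.length(); split > 0; split--) {
            boolean camelCaseBoundary =
                    split == segment.length() || Character.isUpperCase(segment.charAt(split));
            if (!camelCaseBoundary) {
                continue;
            }
            Field head = findField(type, uncapitalize(segment.substring(0, split)));
            if (head == null) {
                continue;
            }
            // The first matching head wins; there is no backtracking if the tail fails later.
            path.add(head.getName());
            String tail = segment.substring(split);
            return tail.isEmpty() ? head.getType() : resolveSegment(tail, head.getType(), path);
        }
        throw new IllegalArgumentException(
                "No property found for '" + segment + "' on " + type.getSimpleName());
    }

    private static Field findField(Class<?> type, String name) {
        try {
            return type.getDeclaredField(name);
        } catch (NoSuchFieldException e) {
            return null;
        }
    }

    private static String uncapitalize(String value) {
        return Character.toLowerCase(value.charAt(0)) + value.substring(1);
    }

    public static void main(String[] args) {
        System.out.println(resolve("AddressZipCode", Person.class));
        System.out.println(resolve("Address_ZipCode", AmbiguousPerson.class));
    }
}
```

In this sketch, resolving AddressZipCode against AmbiguousPerson fails because the addressZip head matches first and its type has no code property, while Address_ZipCode resolves as intended.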

6.4.4. Special parameter handling

To handle parameters in your query, define method parameters as already seen in the preceding examples.

Besides that, the infrastructure recognizes certain specific types like Pageable and Sort, to apply pagination and sorting to your queries dynamically.

The following example demonstrates these features:

Using Pageable, Slice, and Sort in query methods

Page<User> findByLastname(String lastname, Pageable pageable);

Slice<User> findByLastname(String lastname, Pageable pageable);

List<User> findByLastname(String lastname, Sort sort);

List<User> findByLastname(String lastname, Pageable pageable);

| APIs taking Sort and Pageable expect non-null values to be handed into methods. If you don’t want to apply any sorting or pagination, use Sort.unsorted() and Pageable.unpaged(). |

The first method lets you pass an org.springframework.data.domain.Pageable instance to the query method to dynamically add paging to your statically defined query.

A Page knows about the total number of elements and pages available.

It does so by the infrastructure triggering a count query to calculate the overall number.

As this might be expensive (depending on the store used), you can instead return a Slice.

A Slice only knows about whether a next Slice is available, which might be sufficient when walking through a larger result set.

Sorting options are handled through the Pageable instance, too.

If you only need sorting, add an org.springframework.data.domain.Sort parameter to your method.

As you can see, returning a List is also possible.

In this case, the additional metadata required to build the actual Page instance is not created (which, in turn, means that the additional count query that would have been necessary is not issued).

Rather, it restricts the query to look up only the given range of entities.

| To find out how many pages you get for an entire query, you have to trigger an additional count query. By default, this query is derived from the query you actually trigger. |
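The difference between a Page and a Slice can be illustrated with an in-memory stand-in for the store. The sketch below is not Spring Data code: SliceVersusPageSketch, SimpleSlice, SimplePage, fetch, and count are hypothetical names, and the "fetch one element more than requested" technique is an assumption about one common way to learn whether a next slice exists without counting.

```java
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

class SliceVersusPageSketch {

    // Stand-in for the data store: ten rows with the values 1 to 10.
    static final List<Integer> ROWS =
            IntStream.rangeClosed(1, 10).boxed().collect(Collectors.toList());

    static final class SimpleSlice {
        final List<Integer> content;
        final boolean hasNext;

        SimpleSlice(List<Integer> content, boolean hasNext) {
            this.content = content;
            this.hasNext = hasNext;
        }
    }

    static final class SimplePage {
        final List<Integer> content;
        final long totalElements;
        final int totalPages;

        SimplePage(List<Integer> content, long totalElements, int totalPages) {
            this.content = content;
            this.totalElements = totalElements;
            this.totalPages = totalPages;
        }
    }

    // Simulates a range query (skip and limit) against the store.
    static List<Integer> fetch(int offset, int limit) {
        return ROWS.stream().skip(offset).limit(limit).collect(Collectors.toList());
    }

    // Simulates the additional count query that a Page needs.
    static long count() {
        return ROWS.size();
    }

    // A slice asks for one extra row and only reports whether it was there.
    static SimpleSlice slice(int pageNumber, int pageSize) {
        List<Integer> fetched = fetch(pageNumber * pageSize, pageSize + 1);
        boolean hasNext = fetched.size() > pageSize;
        return new SimpleSlice(hasNext ? fetched.subList(0, pageSize) : fetched, hasNext);
    }

    // A page issues the range query plus a count query to compute the totals.
    static SimplePage page(int pageNumber, int pageSize) {
        List<Integer> content = fetch(pageNumber * pageSize, pageSize);
        long total = count();
        int totalPages = (int) ((total + pageSize - 1) / pageSize);
        return new SimplePage(content, total, totalPages);
    }

    public static void main(String[] args) {
        SimpleSlice slice = slice(0, 4);
        SimplePage page = page(0, 4);
        System.out.println(slice.content + " hasNext=" + slice.hasNext);
        System.out.println(page.content + " totalPages=" + page.totalPages);
    }
}
```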

Paging and Sorting

Simple sorting expressions can be defined by using property names. Expressions can be concatenated to collect multiple criteria into one expression.

Sort sort = Sort.by("firstname").ascending()
  .and(Sort.by("lastname").descending());

For a more type-safe way of defining sort expressions, start with the type to define the sort expression for and use method references to define the properties to sort on.

TypedSort<Person> person = Sort.sort(Person.class);

Sort sort = person.by(Person::getFirstname).ascending()
  .and(person.by(Person::getLastname).descending());

6.4.5. Limiting Query Results

The results of query methods can be limited by using the first or top keywords, which can be used interchangeably.

An optional numeric value can be appended to top or first to specify the maximum result size to be returned.

If the number is left out, a result size of 1 is assumed.

The following example shows how to limit the query size:

Limiting the result size of a query with Top and First

User findFirstByOrderByLastnameAsc();

User findTopByOrderByAgeDesc();

Page<User> queryFirst10ByLastname(String lastname, Pageable pageable);

Slice<User> findTop3ByLastname(String lastname, Pageable pageable);

List<User> findFirst10ByLastname(String lastname, Sort sort);

List<User> findTop10ByLastname(String lastname, Pageable pageable);

The limiting expressions also support the Distinct keyword.

Also, for queries that limit the result set to one instance, wrapping the result in an Optional is supported.

If pagination or slicing is applied to a limiting query (and to the calculation of the number of pages available), it is applied within the limited result.

| Limiting the results in combination with dynamic sorting by using a Sort parameter lets you express query methods for the 'K' smallest as well as for the 'K' biggest elements. |
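As a rough illustration of the rules above, the following plain Java sketch extracts the limit from a method name and applies it to a sorted in-memory list. The regular expression is a deliberately simplified assumption and not the pattern Spring Data uses, and LimitKeywordSketch, maxResults, and firstK are hypothetical names. A missing number yields a limit of 1, and combining the limit with an ascending or descending order gives the 'K' smallest or 'K' biggest elements.

```java
import java.util.Comparator;
import java.util.List;
import java.util.OptionalInt;
import java.util.regex.Matcher;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

class LimitKeywordSketch {

    // Simplified, hypothetical pattern: subject prefix, optional Distinct,
    // First or Top, an optional number, and then the "By" that starts the predicate.
    private static final Pattern LIMIT = Pattern.compile(
            "^(find|read|get|query)(Distinct)?(First|Top)(\\d*)(\\p{Lu}\\w*?)?By");

    /** Returns the maximum result size encoded in the method name, if any. */
    static OptionalInt maxResults(String methodName) {
        Matcher matcher = LIMIT.matcher(methodName);
        if (!matcher.find()) {
            return OptionalInt.empty();
        }
        String number = matcher.group(4);
        // If the number is left out, a result size of 1 is assumed.
        return OptionalInt.of(number.isEmpty() ? 1 : Integer.parseInt(number));
    }

    /** In-memory analogue of a limiting query with a sort order: the first k elements. */
    static <T> List<T> firstK(List<T> items, Comparator<? super T> order, int k) {
        return items.stream().sorted(order).limit(k).collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<Integer> ages = List.of(42, 17, 65, 30, 23);
        int limit = maxResults("findTop3ByLastname").orElse(Integer.MAX_VALUE);
        System.out.println(firstK(ages, Comparator.<Integer>naturalOrder(), limit));
        System.out.println(firstK(ages, Comparator.<Integer>reverseOrder(), limit));
    }
}
```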

6.4.6. Repository Methods Returning Collections or Iterables

Query methods that return multiple results can use the standard Java Iterable, List, and Set types.

Beyond that we support returning Spring Data’s Streamable, a custom extension of Iterable, as well as collection types provided by Vavr.

Using Streamable as Query Method Return Type

Streamable can be used as an alternative to Iterable or any collection type.

It provides convenience methods to access a non-parallel Stream (missing from Iterable), the ability to directly ….filter(…) and ….map(…) over the elements and concatenate the Streamable to others:

interface PersonRepository extends Repository<Person, Long> {

Streamable<Person> findByFirstnameContaining(String firstname);

Streamable<Person> findByLastnameContaining(String lastname);

}

Streamable<Person> result = repository.findByFirstnameContaining("av")

  .and(repository.findByLastnameContaining("ea"));

Returning Custom Streamable Wrapper Types

Providing dedicated wrapper types for collections is a commonly used pattern to provide an API on a query execution result that returns multiple elements. Usually these types are used by invoking a repository method returning a collection-like type and creating an instance of the wrapper type manually. That additional step can be avoided, as Spring Data lets you use these wrapper types as query method return types if they meet the following criteria:

-

The type implements Streamable.

-

The type exposes either a constructor or a static factory method named of(…) or valueOf(…) taking Streamable as an argument.

A sample use case looks as follows:

class Product { (1)
  MonetaryAmount getPrice() { … }
}

@RequiredArgsConstructor(staticName = "of")
class Products implements Streamable<Product> { (2)

  private final Streamable<Product> streamable;

  public MonetaryAmount getTotal() { (3)
    return streamable.stream()
      .map(Product::getPrice)
      .reduce(Money.of(0), MonetaryAmount::add);
  }

  @Override
  public Iterator<Product> iterator() { // required to implement Streamable
    return streamable.iterator();
  }
}

interface ProductRepository extends Repository<Product, Long> {
  Products findAllByDescriptionContaining(String text); (4)
}

| 1 | A Product entity that exposes API to access the product’s price. |

| 2 | A wrapper type for a Streamable<Product> that can be constructed via Products.of(…) (factory method created via the Lombok annotation). |

| 3 | The wrapper type exposes additional API calculating new values on the Streamable<Product>. |

| 4 | That wrapper type can be used as query method return type directly. No need to return Streamable<Product> and manually wrap it in the repository client. |
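To make the two criteria more tangible without any Spring or Lombok dependency, the following sketch uses a hypothetical MiniStreamable interface as a stand-in for Streamable and BigDecimal as a stand-in for MonetaryAmount. The wrap method shows one way an infrastructure could create the wrapper reflectively; looking for of(…) or valueOf(…) before falling back to a constructor is an assumption of this sketch, not a statement about Spring Data's lookup order.

```java
import java.lang.reflect.Constructor;
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;
import java.math.BigDecimal;
import java.util.Iterator;
import java.util.List;
import java.util.stream.Stream;
import java.util.stream.StreamSupport;

class WrapperTypeSketch {

    // Hypothetical stand-in for Spring Data's Streamable, reduced to what this sketch needs.
    interface MiniStreamable<T> extends Iterable<T> {
        default Stream<T> stream() {
            return StreamSupport.stream(spliterator(), false);
        }
    }

    static final class Product {
        private final BigDecimal price;

        Product(String price) {
            this.price = new BigDecimal(price);
        }

        BigDecimal getPrice() {
            return price;
        }
    }

    // Meets both criteria: implements the streamable type and exposes a static of(…) factory.
    static final class Products implements MiniStreamable<Product> {
        private final MiniStreamable<Product> streamable;

        private Products(MiniStreamable<Product> streamable) {
            this.streamable = streamable;
        }

        static Products of(MiniStreamable<Product> streamable) {
            return new Products(streamable);
        }

        @Override
        public Iterator<Product> iterator() {
            return streamable.iterator();
        }

        BigDecimal getTotal() {
            return stream().map(Product::getPrice).reduce(BigDecimal.ZERO, BigDecimal::add);
        }
    }

    // Tries a static of(…) or valueOf(…) factory first, then a one-argument constructor.
    static <W> W wrap(Class<W> wrapperType, MiniStreamable<?> source) {
        try {
            for (String name : List.of("of", "valueOf")) {
                for (Method candidate : wrapperType.getDeclaredMethods()) {
                    if (candidate.getName().equals(name)
                            && Modifier.isStatic(candidate.getModifiers())
                            && candidate.getParameterCount() == 1
                            && candidate.getParameterTypes()[0] == MiniStreamable.class) {
                        candidate.setAccessible(true);
                        return wrapperType.cast(candidate.invoke(null, source));
                    }
                }
            }
            Constructor<W> constructor = wrapperType.getDeclaredConstructor(MiniStreamable.class);
            constructor.setAccessible(true);
            return constructor.newInstance(source);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("No usable factory method or constructor on " + wrapperType, e);
        }
    }

    public static void main(String[] args) {
        List<Product> rows = List.of(new Product("1.50"), new Product("2.25"));
        MiniStreamable<Product> source = rows::iterator;
        System.out.println(wrap(Products.class, source).getTotal());
    }
}
```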

Support for Vavr Collections

Vavr is a library to embrace functional programming concepts in Java. It ships with a custom set of collection types that can be used as query method return types.

| Vavr collection type | Used Vavr implementation type | Valid Java source types |

|---|---|---|

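| io.vavr.collection.Seq | io.vavr.collection.List | java.util.Iterable |

| io.vavr.collection.Set | io.vavr.collection.LinkedHashSet | java.util.Iterable |

| io.vavr.collection.Map | io.vavr.collection.LinkedHashMap | java.util.Map |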

The types in the first column (or subtypes thereof) can be used as query method return types and will get the types in the second column used as implementation type depending on the Java type of the actual query result (third column). Alternatively, Traversable (the Vavr equivalent of Iterable) can be declared and we derive the implementation class from the actual return value, i.e. a java.util.List will be turned into a Vavr List/Seq, a java.util.Set becomes a Vavr LinkedHashSet/Set etc.

6.4.7. Null Handling of Repository Methods

As of Spring Data 2.0, repository CRUD methods that return an individual aggregate instance use Java 8’s Optional to indicate the potential absence of a value.

Besides that, Spring Data supports returning the following wrapper types on query methods:

-

com.google.common.base.Optional

-

scala.Option

-

io.vavr.control.Option

Alternatively, query methods can choose not to use a wrapper type at all.

The absence of a query result is then indicated by returning null.

Repository methods returning collections, collection alternatives, wrappers, and streams are guaranteed never to return null but rather the corresponding empty representation.

See “Repository query return types” for details.

Nullability Annotations

You can express nullability constraints for repository methods by using Spring Framework’s nullability annotations.

They provide a tooling-friendly approach and opt-in null checks during runtime, as follows:

-

@NonNullApi: Used on the package level to declare that the default behavior for parameters and return values is to not accept or produce null values.

-

@NonNull: Used on a parameter or return value that must not be null (not needed on a parameter and return value where @NonNullApi applies).

-

@Nullable: Used on a parameter or return value that can be null.

Spring annotations are meta-annotated with JSR 305 annotations (a dormant but widely used JSR).

JSR 305 meta-annotations let tooling vendors such as IDEA, Eclipse, and Kotlin provide null-safety support in a generic way, without having to hard-code support for Spring annotations.

To enable runtime checking of nullability constraints for query methods, you need to activate non-nullability on the package level by using Spring’s @NonNullApi in package-info.java, as shown in the following example:

package-info.java

@org.springframework.lang.NonNullApi
package com.acme;

Once non-null defaulting is in place, repository query method invocations get validated at runtime for nullability constraints.

If a query execution result violates the defined constraint, an exception is thrown.

This happens when the method would return null but is declared as non-nullable (the default with the annotation defined on the package the repository resides in).

If you want to opt in to nullable results again, selectively use @Nullable on individual methods.

Using the result wrapper types mentioned at the start of this section continues to work as expected: An empty result is translated into the value that represents absence.
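How such a runtime check could behave can be sketched with a JDK dynamic proxy. Everything here is a stand-in chosen for illustration: the nested @Nullable annotation replaces org.springframework.lang.Nullable, non-null is simply treated as the default (as if @NonNullApi applied), IllegalStateException takes the place of EmptyResultDataAccessException, and UserLookup, InMemoryUserLookup, and checked are hypothetical names. It is not how Spring Data implements the validation.

```java
import java.lang.annotation.Annotation;
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.Map;
import java.util.Optional;

class NullabilityCheckSketch {

    // Hypothetical stand-in for org.springframework.lang.Nullable.
    @Retention(RetentionPolicy.RUNTIME)
    @Target({ElementType.METHOD, ElementType.PARAMETER})
    @interface Nullable {}

    interface UserLookup {
        String getByEmail(String email); // non-null parameter and result by default

        @Nullable
        String findByEmail(@Nullable String email); // opts in to null

        Optional<String> findOptionalByEmail(String email); // absence expressed by the wrapper
    }

    static final class InMemoryUserLookup implements UserLookup {
        private final Map<String, String> namesByEmail;

        InMemoryUserLookup(Map<String, String> namesByEmail) {
            this.namesByEmail = namesByEmail;
        }

        public String getByEmail(String email) {
            return namesByEmail.get(email);
        }

        public String findByEmail(String email) {
            return email == null ? null : namesByEmail.get(email);
        }

        public Optional<String> findOptionalByEmail(String email) {
            return Optional.ofNullable(namesByEmail.get(email));
        }
    }

    /** Wraps an in-memory lookup in a proxy that validates arguments and results. */
    static UserLookup checked(Map<String, String> namesByEmail) {
        UserLookup target = new InMemoryUserLookup(namesByEmail);
        InvocationHandler handler = (proxy, method, args) -> {
            if (method.getDeclaringClass() == Object.class) {
                return method.invoke(target, args); // toString, equals, hashCode
            }
            Annotation[][] parameterAnnotations = method.getParameterAnnotations();
            for (int i = 0; i < parameterAnnotations.length; i++) {
                if (args[i] == null && !isNullable(parameterAnnotations[i])) {
                    throw new IllegalArgumentException(
                            "Parameter " + i + " of " + method.getName() + " must not be null");
                }
            }
            Object result = method.invoke(target, args);
            if (result == null && !method.isAnnotationPresent(Nullable.class)) {
                // Stand-in for the exception thrown on an unexpected empty result.
                throw new IllegalStateException(method.getName() + " produced no result");
            }
            return result;
        };
        return (UserLookup) Proxy.newProxyInstance(
                UserLookup.class.getClassLoader(), new Class<?>[] {UserLookup.class}, handler);
    }

    private static boolean isNullable(Annotation[] annotations) {
        for (Annotation annotation : annotations) {
            if (annotation.annotationType() == Nullable.class) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        UserLookup lookup = checked(Map.of("alice@acme.com", "Alice"));
        System.out.println(lookup.getByEmail("alice@acme.com"));
        System.out.println(lookup.findByEmail(null));
        System.out.println(lookup.findOptionalByEmail("bob@acme.com"));
    }
}
```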

The following example shows a number of the techniques just described:

package com.acme; (1)

import java.util.Optional;

import org.springframework.data.repository.Repository;
import org.springframework.lang.Nullable;

interface UserRepository extends Repository<User, Long> {

  User getByEmailAddress(EmailAddress emailAddress); (2)

  @Nullable
  User findByEmailAddress(@Nullable EmailAddress emailAddress); (3)

  Optional<User> findOptionalByEmailAddress(EmailAddress emailAddress); (4)
}

| 1 | The repository resides in a package (or sub-package) for which we have defined non-null behavior. |

| 2 | Throws an EmptyResultDataAccessException when the query executed does not produce a result. Throws an IllegalArgumentException when the emailAddress handed to the method is null. |

| 3 | Returns null when the query executed does not produce a result. Also accepts null as the value for emailAddress. |